Along with the usual bug fixing, maintenance, and upstream version enablement work that goes into every Charmed Ceph release, we also worked on a number of new features in this cycle.

Stand-alone NFS shares

In Ceph, you can use CephFS for your network file share needs, but some applications and use cases require NFS. In Charmed Ceph we now have the option to deploy the ganesha plugin to provide NFS shares from a Ceph cluster. This was available before for OpenStack with Manila, but it is now fully stand-alone and has no dependencies on OpenStack, which is perfect if you are deploying Ceph as a stand-alone storage system.

To meet our scalability goals we decided to allow the charms to be deployed in two different ways. For simplicity, multiple shares can be presented from one pair of ganesha units. For better scaling and workload isolation, a single share can be mapped to a single pair of ganesha units, and for subsequent shares, additional ganesha units will be deployed.

It was designed in this way so that users would not become bottlenecked by the performance of a pair of ganesha units handling all NFS traffic, nor experience noisy-neighbour effects between heavy NFS users.

We maintain high availability of the NFS endpoint by deploying pairs of ganesha units, with corosync/pacemaker handling failover between them.

Note: the ceph-nfs charm is still in tech-preview.

Drive replacement improvements

Previously, replacing a disk was quite an involved process that required tracking down OSD IDs, UUIDs for the underlying disk and, if using bcache, IDs for the caching device too.

In this release, we have streamlined the process to be straightforward and repeatable. The most important thing that this improvement brings is a higher level of safety, by reducing the risk of mixing up device IDs, and inadvertently affecting another fully functional OSD.

PMEM investigations

In this cycle we also did some investigative work into PMEM and the potential benefits it may bring as a host side cache, using the RBD persistent write-back cache feature.

Persistent memory (or NVDIMM) is a class of storage device that resides in one or more DIMM slots of a server, but unlike normal RAM, any data written to an NVDIMM persists through server reboots. Given their location on the server mainboard they have very low latency and very high IO capabilities. Perfect for caching the most frequently accessed data!

It’s important to note that this is a relatively new feature, and one needs to be very selective about the scenarios in which it is used. For example, if the server fails and becomes unrecoverable, then the data in the cache is lost. The cache is structured so that the image/volume on the Ceph cluster can be considered crash-consistent, but any data in the cache that had not yet been flushed to Ceph is lost for good.
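To make the trade-off concrete, here is a minimal toy model of an ordered write-back cache. It is not how the RBD persistent write-back cache is implemented; the class and method names are made up for illustration. It only shows why the image on the cluster stays crash-consistent while the most recent writes can still be lost.

```python
from collections import deque


class WriteBackCache:
    """Toy model of an ordered write-back cache in front of a backing image."""

    def __init__(self):
        self.backing = {}       # blocks that have reached the cluster
        self.pending = deque()  # acknowledged writes not yet flushed, oldest first

    def write(self, block, data):
        # The write is acknowledged as soon as it lands in the local cache.
        self.pending.append((block, data))

    def flush(self, count=None):
        # Flush the oldest writes first, so the backing image always matches
        # some earlier point in the write history (crash-consistent).
        n = len(self.pending) if count is None else min(count, len(self.pending))
        for _ in range(n):
            block, data = self.pending.popleft()
            self.backing[block] = data

    def lose_cache(self):
        # Simulate an unrecoverable client host: unflushed writes are gone.
        lost = list(self.pending)
        self.pending.clear()
        return lost


cache = WriteBackCache()
cache.write(0, "a1")
cache.write(1, "b1")
cache.write(0, "a2")
cache.flush(count=2)       # only the two oldest writes reach the cluster
lost = cache.lose_cache()  # the host dies before the third write is flushed

print(cache.backing)  # {0: 'a1', 1: 'b1'}
print(lost)           # [(0, 'a2')]
```

The backing image is a valid older state (both blocks hold their first version), but the update to block 0 never made it to the cluster.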

Where we see this feature being useful is for highly parallelised batch processing workloads, where the loss of one part of the job isn’t catastrophic and can just be re-run.

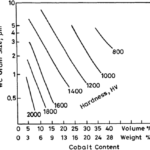

For testing purposes we built a Ceph cluster that was highly IO constrained: just a single node with three spinning disk OSDs in LXD containers. We then created an RBD volume of 100 GB and allocated 5 GB of local PMEM to that volume.

In the first test, using fio configured for 50/50 read/write without the cache in place, we were only able to achieve just over 1,000 IOPS. In the second, with the cache in use, we were able to achieve over 27,000 IOPS, a huge improvement (roughly 27 times) because all the IO was completed locally.

An important observation, however, is that once the fio test had completed, the process didn’t exit for quite some time, as the outstanding IOs in the local cache still had to be flushed to the Ceph cluster.
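A back-of-the-envelope estimate shows why the flush can take a while. The numbers below are assumptions for illustration, not measurements from our test: a completely dirty 5 GiB cache, 4 KiB writes, and a cluster that absorbs about 500 write IOPS (half of the roughly 1,000 mixed IOPS seen without the cache).

```python
def drain_seconds(dirty_bytes, block_size, backend_write_iops):
    """Estimate the seconds needed to flush dirty cache data to the cluster."""
    outstanding_ios = dirty_bytes / block_size
    return outstanding_ios / backend_write_iops


GIB = 1024 ** 3

# All three inputs are assumptions: a fully dirty 5 GiB cache, 4 KiB blocks,
# and a backend that sustains about 500 write IOPS.
seconds = drain_seconds(dirty_bytes=5 * GIB, block_size=4096, backend_write_iops=500)
print(f"{seconds / 60:.0f} minutes")  # 44 minutes
```

Under those assumptions the cache would need around three quarters of an hour to drain, so a long wait after the benchmark finishes is to be expected on a cluster this slow.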

Upstream Quincy

The upstream Quincy release really feels like Ceph has reached a maturity point where the community is not just chasing new features to have parity with other storage solutions, but adding a level of polish to the feature set that has existed for quite some time.

Here are some of the features that stand out in the release notes.

RGW rate limiting

Rate limiting is really quite interesting for organisations with a large number of users, especially when there are heavy users or buckets that are accessed frequently. Applying rate limits to either of those entities (users or buckets) will help share cluster resources more fairly, and avoid noisy neighbour effects where one user or workload monopolises cluster resources. Limits can be set on read operations, write operations, read bytes and write bytes, each measured per minute.
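As a rough illustration of what a per-minute operation limit does, here is a minimal fixed-window limiter. This is a conceptual sketch only and not RGW's implementation; the class, the entity names and the limit of two operations are all hypothetical.

```python
import time


class PerMinuteLimiter:
    """Fixed-window limiter: at most `max_ops` operations per entity per minute."""

    def __init__(self, max_ops, clock=time.monotonic):
        self.max_ops = max_ops
        self.clock = clock
        self.windows = {}  # entity -> (window index, ops used in that window)

    def allow(self, entity):
        window = int(self.clock() // 60)
        current, used = self.windows.get(entity, (window, 0))
        if current != window:
            used = 0  # a new minute has started, so the budget resets
        if used >= self.max_ops:
            return False
        self.windows[entity] = (window, used + 1)
        return True


# Hypothetical users and limit, with a fake clock so the run is deterministic.
now = [0.0]
limiter = PerMinuteLimiter(max_ops=2, clock=lambda: now[0])

results = [limiter.allow("heavy-user") for _ in range(3)]
other = limiter.allow("light-user")
now[0] = 61.0
after_reset = limiter.allow("heavy-user")

print(results, other, after_reset)  # [True, True, False] True True
```

The heavy user is throttled after two operations, the light user is unaffected, and the heavy user's budget returns in the next minute.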

Farewell Filestore

Hopefully there are no Ceph users still deploying OSDs based on Filestore, given the improvements that BlueStore brought us in terms of performance, reliability and scalability. Either way, Filestore is deprecated as of Quincy.

LevelDB support

Ceph Quincy no longer supports LevelDB, so it is important to remember to migrate MONs and OSDs to RocksDB prior to any upgrade to Quincy. For OSDs this will mean destroying and redeploying them. Likewise for the MONs, you will need to deploy new MONs and add them to the cluster one by one, removing an old MON each time quorum is re-established. Just be careful that no external applications have hard-coded the IP addresses of the existing MONs. Those applications won’t lose access to the cluster right away, but you may run into issues after a host reboot.
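The add-one, remove-one approach for MONs can be sketched as a simple plan. This is only a planning aid under the assumption of a three-MON cluster with hypothetical monitor names; it does not talk to a cluster and is not the procedure the charms run.

```python
def quorum_size(monitor_count):
    """Smallest majority of the monitors listed in the monitor map."""
    return monitor_count // 2 + 1


def rolling_replacement(old_mons, new_mons):
    """Plan an add-one, remove-one replacement and record quorum needs per step."""
    members = list(old_mons)
    plan = []
    for old, new in zip(old_mons, new_mons):
        members.append(new)
        plan.append(("add", new, len(members), quorum_size(len(members))))
        members.remove(old)
        plan.append(("remove", old, len(members), quorum_size(len(members))))
    return plan


# Hypothetical monitor names.
plan = rolling_replacement(["old-a", "old-b", "old-c"], ["new-a", "new-b", "new-c"])
for action, mon, total, needed in plan:
    print(f"{action:6} {mon}: {total} MONs in map, {needed} needed for quorum")
```

With three MONs the map alternates between four members (three needed for quorum) and three members (two needed), so the cluster can tolerate one MON being down at every step of the migration.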
