GNU Wget is a free tool for downloading files from the internet from the command line. Wget has many features, including the ability to download multiple files, limit bandwidth, resume downloads, skip SSL certificate checks, download in the background, mirror a website, and more.

This article demonstrates commonly used options of the wget command.

Wget Syntax

Wget takes the following simple syntax.

$ wget [options] [url]

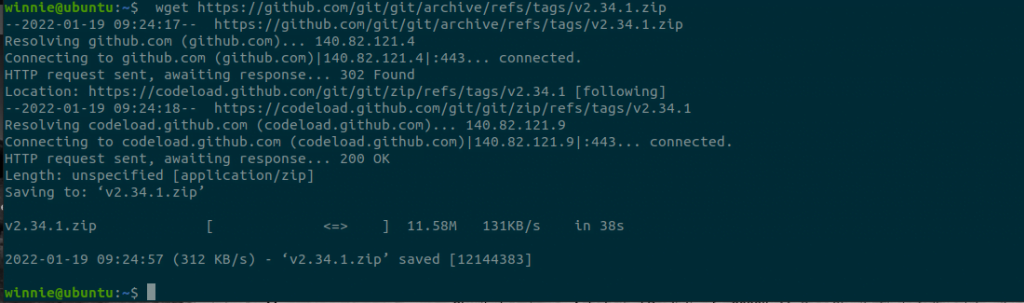

1. Download a file

With no command options, you can download a file with the wget command by specifying the URL of the resource as shown.

$ wget https://github.com/git/git/archive/refs/tags/v2.34.1.zip

2. Download multiple files

To download multiple files, create a text file that lists the URLs of the resources to be downloaded, one per line. The text file acts as an input file from which wget reads the URLs.

In this example, we have saved a few URLs in the multipledownloads.txt text file.

Next, download the files using wget with the -i option as shown. With the -i option, wget reads the input file and downloads each resource listed in it.

$ wget -i multipledownloads.txt
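
One way to build the input file is with a shell here-document. The sketch below is illustrative only: the three URLs are borrowed from other examples in this article, and download_all is a hypothetical helper name, not part of wget.

```shell
# Write one URL per line into the input file (URLs reused from this article).
cat > multipledownloads.txt <<'EOF'
https://github.com/git/git/archive/refs/tags/v2.34.1.zip
https://wordpress.org/latest.tar.gz
http://download.virtualbox.org/virtualbox/rpm/rhel/virtualbox.repo
EOF

# Hypothetical helper: download every URL listed in the given file.
download_all() {
    wget -i "$1"
}

# Show how many URLs are queued; nothing is downloaded until download_all is called.
wc -l < multipledownloads.txt
```

Running download_all multipledownloads.txt afterwards would start the actual downloads.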

3. Download files in the background

To download files in the background, use the wget command with -b option. This option comes in handy when the file is large and you need to utilize the terminal for something else.

$ wget -b https://github.com/git/git/archive/refs/tags/v2.34.1.zip

Wget writes its output to a file named wget-log in the current directory. To follow the progress of the download, run:

$ tail -f wget-log
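
If you prefer a predictable log file name, the -b option can be combined with -o, which tells wget where to write its log. The following is a minimal sketch under that assumption; download_in_background and the WGET override variable are hypothetical names introduced here for illustration.

```shell
# Allow the wget binary to be overridden (for example in tests); defaults to wget.
WGET=${WGET:-wget}

# Hypothetical helper: start a background download and log to a chosen file.
# $1 = URL to download, $2 = log file name
download_in_background() {
    url=$1
    log=$2
    # -b sends wget to the background; -o writes log messages to "$log"
    "$WGET" -b -o "$log" "$url"
}
```

For example, download_in_background https://github.com/git/git/archive/refs/tags/v2.34.1.zip git-download.log, followed by tail -f git-download.log to watch progress.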

4. Resume a download

Sometimes the internet connection drops partway through a download. Use the -c option to resume the download from the point where it stopped instead of starting over. Run the command in the directory that holds the partial file. The following is an example.

$ wget -c https://download.rockylinux.org/pub/rocky/8/isos/x86_64/Rocky-8.4-x86_64-minimal.iso
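
On an unreliable connection, the resume command can be wrapped in a small retry loop. This is a sketch rather than a built-in wget feature: retry_until_done and the RETRY_DELAY variable are hypothetical names, and the loop simply re-runs whatever command it is given until that command succeeds.

```shell
# Hypothetical helper: run a command until it succeeds or the attempt limit is hit.
# Usage: retry_until_done MAX_ATTEMPTS COMMAND [ARGS...]
retry_until_done() {
    max_attempts=$1
    shift
    attempt=1
    until "$@"; do
        if [ "$attempt" -ge "$max_attempts" ]; then
            echo "giving up after $attempt attempts" >&2
            return 1
        fi
        attempt=$((attempt + 1))
        # Pause between attempts; RETRY_DELAY is an assumed variable (default 5 seconds).
        sleep "${RETRY_DELAY:-5}"
    done
}
```

A call such as retry_until_done 5 wget -c followed by the URL above would retry up to five times, and because of -c each attempt continues from the partial file.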

5. Save a downloaded file under a different name

Use the wget command with the -O (uppercase) option followed by the desired file name. Note that the lowercase -o option does something different: it writes wget's log messages to the named file.

$ wget -O git.zip https://github.com/git/git/archive/refs/tags/v2.34.1.zip

The file is saved as git.zip in the example above.
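
Because -O (output document) and -o (log file) are easy to confuse, the sketch below uses both in one command. The helper name fetch_as, the WGET override variable, and the .log suffix are assumptions made for illustration.

```shell
# Allow the wget binary to be overridden (for example in tests); defaults to wget.
WGET=${WGET:-wget}

# Hypothetical helper: save a download under a chosen name and keep a log beside it.
# $1 = file name to save as, $2 = URL to download
fetch_as() {
    name=$1
    url=$2
    # -O sets the name of the downloaded file; -o sets the name of the log file
    "$WGET" -O "$name" -o "$name.log" "$url"
}
```

Calling fetch_as git.zip with the URL above would write the archive to git.zip and wget's messages to git.zip.log.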

6. Download file under a specific directory

By default, wget saves downloads in the current working directory. To save them elsewhere, use the -P option followed by the path to the directory.

$ sudo wget -P /opt/wordpress https://wordpress.org/latest.tar.gz

7. Set the download speed

By default, the wget command attempts to use all available bandwidth. However, if you are using a shared internet connection or downloading a large file, you can use the --limit-rate option to cap the download speed at a specific value. The value is a rate per second, and you can set it in kilobytes (k), megabytes (m), or gigabytes (g).

In this example, we have capped the download speed at 100 kilobytes per second.

$ wget --limit-rate=100k http://download.virtualbox.org/virtualbox/rpm/rhel/virtualbox.repo
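
To get a feel for what a cap means in practice, the arithmetic can be sketched in the shell. The helper estimate_seconds is hypothetical, and the calculation assumes that k means 1024 bytes and that the cap is the only bottleneck.

```shell
# Hypothetical helper: estimate download time in whole seconds, rounded up.
# $1 = file size in bytes, $2 = rate limit in kilobytes per second
# Assumes 1 kilobyte = 1024 bytes and that the cap is the only bottleneck.
estimate_seconds() {
    size_bytes=$1
    rate_bytes=$(( $2 * 1024 ))
    echo $(( (size_bytes + rate_bytes - 1) / rate_bytes ))
}

# A 1 GiB file (1073741824 bytes) at --limit-rate=100k:
estimate_seconds 1073741824 100
```

Under these assumptions the call prints 10486, which is roughly 2 hours and 55 minutes.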

8. Mirror entire website

Use the -m option with wget to create a mirror of a website. This creates a local copy of the website on your system for local browsing.

$ wget -m https://google.com

You’ll need to provide a few extra parameters to the command above if you wish to browse the downloaded page locally.

$ wget -m -k -p https://google.com

The -k option instructs wget to convert the links in downloaded documents so that they work when viewed locally. The -p option downloads all the files needed to display each HTML page, such as images and stylesheets.
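
When mirroring a real site, it is often worth restricting the crawl and pacing the requests. The sketch below adds -np (--no-parent) and --wait to the options above; mirror_site and the WGET override variable are hypothetical names, and the one-second wait is an arbitrary choice.

```shell
# Allow the wget binary to be overridden (for example in tests); defaults to wget.
WGET=${WGET:-wget}

# Hypothetical helper: mirror a site for offline browsing.
# $1 = start URL
mirror_site() {
    # -m        mirror (recursive download with timestamping)
    # -k        convert links for local viewing
    # -p        fetch page requisites such as images and stylesheets
    # -np       do not ascend to the parent directory of the start URL
    # --wait=1  pause one second between requests to go easy on the server
    "$WGET" -m -k -p -np --wait=1 "$1"
}
```

For example, mirror_site with a URL that points at a documentation subdirectory would stay inside that subdirectory instead of crawling the whole host.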

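9. Ignore SSL checks

Use the --no-check-certificate option to download a file over HTTPS from a server whose SSL certificate is invalid, for example expired or self-signed. Because this option disables certificate verification, use it only with hosts you trust.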

$ wget --no-check-certificate https://website-with-invalid-ss.com

10. Increase number of retries

In case of a network interruption, the wget command attempts to re-establish the connection. By default, it tries up to 20 times to complete the download. Use the --tries option to change the number of attempts.

Here, we have set the number of attempts to 75.

$ wget --tries=75 https://download.rockylinux.org/pub/rocky/8/isos/x86_64/Rocky-8.4-x86_64-minimal.iso
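
The --tries option is often combined with --waitretry, which sets the maximum number of seconds to wait between retries, and --timeout, which sets the network timeout in seconds. The following is a sketch only; fetch_patiently, the WGET override variable, and the specific values for waiting and timeout are assumptions.

```shell
# Allow the wget binary to be overridden (for example in tests); defaults to wget.
WGET=${WGET:-wget}

# Hypothetical helper: download with generous retry settings.
# $1 = URL to download
fetch_patiently() {
    # -c            resume a partial file if one exists
    # --tries=75    make up to 75 attempts
    # --waitretry   wait up to 30 seconds between retries
    # --timeout     give up on a stalled connection after 60 seconds
    "$WGET" -c --tries=75 --waitretry=30 --timeout=60 "$1"
}
```

Calling fetch_patiently with the ISO URL above would keep retrying through short outages while continuing from the partial file.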

Conclusion

Wget is a very useful tool for downloading files. For further information, run man wget or wget --help, or check out the GNU Wget manual.

Karim Buzdar holds a degree in telecommunication engineering and holds several sysadmin certifications including CCNA RS, SCP, and ACE. As an IT engineer and technical author, he writes for various websites.
